AIVidPipeline Blog

AI Video Production Blog

Tutorials, tool comparisons, and workflow guides covering the full AI video pipeline — from script to publish.

Seedance 2.0 Availability 2026: CapCut, China API, and Region Status

Updated April 2026. See where Seedance 2.0 is actually available across CapCut, Volcengine / ModelArk, and BytePlus, plus current API and face-workflow limits.

Seedance 2.0 Real Human Face Rules 2026: Official Limits

Updated April 2026. Official docs from CapCut, BytePlus, and Volcengine show Seedance 2.0 still restricts real-human-face workflows and favors virtual characters.

Google Flow + Veo Guide 2026: What Google's AI Filmmaking Stack Actually Includes

What Google Flow actually includes in 2026: Veo-powered generation, camera controls, SceneBuilder, plan requirements, and where Flow fits relative to Runway, Pika, and CapCut.

Kling 3.0 Motion Control Guide: Element Binding for Character Consistency

Complete guide to Kling 3.0 Motion Control and Element Binding. Industrial-grade facial consistency, multi-shot sequences, mocap-level animation, and ComfyUI integration.

Pika AI Selves Guide 2026: Persistent AI Avatars That Post for You

Complete guide to Pika AI Selves in 2026. Create persistent AI avatars with memory, voice, and personality that auto-post across social platforms. Setup, customization, and use cases.

Seedance 2.0 Copyright Risks 2026: What Creators Should Check Before Commercial Use

Seedance 2.0 is officially live, but creators still need to think about deepfakes, copyrighted characters, training-data opacity, and workflow risk before commercial use.

YouTube AI Content Monetization Guide 2026: Disclosure, Originality, and Low-Risk Workflows

What YouTube’s public rules actually say about AI content in 2026: when disclosure is required, what inauthentic content means, and how to keep AI-assisted channels monetizable.

Best AI Agent Skills for Video Production 2026: 18 Skills Mapped to Your Pipeline

The 18 best AI agent skills for video production in 2026, mapped to 9 pipeline stages. Install commands, compatibility, and workflow guides for Claude Code, Codex, and Cursor.

Best AI Agent Skills for YouTube Creators 2026: Automate Your Channel

The 12 best AI agent skills for YouTube creators in 2026. Scripting, thumbnail generation, SEO optimization, subtitle generation, and multi-platform publishing with Claude Code and Codex.

Best AI Lip Sync Tools 2026: Sync Labs, HeyGen, Rask AI Compared

Compare the best AI lip sync tools in 2026. Sync Labs, HeyGen, D-ID, Rask AI, Pika, and Wav2Lip ranked by accuracy, language support, pricing, and production workflow fit.

Best AI Subtitle Generators 2026: CapCut, Descript, HappyScribe Compared

Compare the best AI subtitle generators in 2026. CapCut, Descript, HappyScribe, OpusClip, Veed.io, and Maestra ranked by accuracy, language support, export formats, and pricing.

Best AI Video Upscalers 2026: Topaz, CapCut, HitPaw Compared

Compare the best AI video upscalers in 2026. Topaz Video AI, CapCut, HitPaw, AVCLabs, and VideoProc ranked by output quality, speed, pricing, and workflow integration.

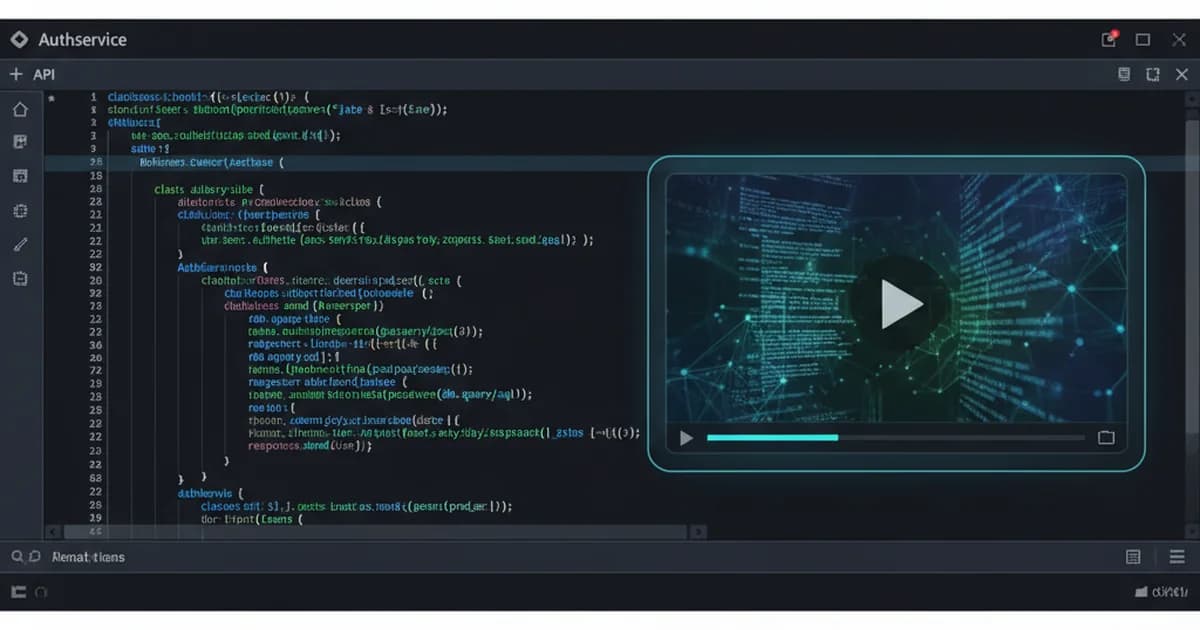

Remotion Agent Skills Guide 2026: Programmatic Video with Claude Code

Complete guide to Remotion agent skills for Claude Code and Codex. Learn programmatic video creation with 28 modular rules, 9 components, and 7 transitions. 126K+ installs on skills.sh.

BytePlus ModelArk 2026: Seedance 1.5 Pro, Seedream 5, and the Current ByteDance Video Stack

As of March 19, 2026, BytePlus is actively surfacing a ByteDance AI stack built around Seedance 1.5 Pro, Seedream 5, and ModelArk. This guide explains what that means for teams evaluating Chinese video-generation infrastructure instead of just single-model demos.

BytePlus VOD 2026: Why Chinese AI Video May Be Winning in the Pipeline, Not Just the Model

BytePlus VOD updated its release notes on March 10, 2026 with new video enhancement tiers and custom bitrate control. Combined with its current subtitle and workflow tooling, this suggests Chinese AI video infrastructure is competing on what happens after generation, not only during it.

ElevenLabs vs Retell 2026: Full-Stack Voice AI or Telephony-First Middleware?

ElevenLabs published an official comparison with Retell in the week of March 17, 2026. This guide explains the real tradeoff: integrated voice infrastructure versus telephony-first orchestration, and when each architecture makes more sense.

PixVerse 2026: Why China's Most Interesting Video Company May Not Be Betting on One Model

PixVerse's March 12 funding announcement and March 13 CLI launch point to a different strategy from most AI video companies: not just building one flagship model, but becoming a model mall, real-time engine, and developer workflow layer at the same time.

SkyReels V4 2026: Why China's Hottest Video Model Matters More Than the #1 Claim

Chinese media on March 19 began framing SkyReels V4 as a new global leader after the latest Artificial Analysis update. The more durable story is not only the headline rank, but SkyReels V4's shift toward AI drama, joint video-audio generation, and unified editing.

Eleven v3 Guide 2026: Audio Tags, Dialogue Mode, and When Not to Use It

ElevenLabs updated its Eleven v3 page on March 14, 2026 to say the model is no longer in alpha and is now generally available. This guide explains what changed, where v3 is actually strong, and when builders should still stay on v2.5 Turbo or Flash.

ElevenLabs Flows Guide 2026: Build Repeatable Creative Pipelines in One Canvas

ElevenLabs updated its Flows announcement on March 11, 2026. This guide explains why Flows matters, how the node-based canvas changes creative operations, and where it fits for batch testing, reusable pipelines, and multi-model production.

ElevenLabs vs Vapi 2026: Full-Stack Voice Platform or Orchestration Layer?

ElevenLabs published a detailed comparison with Vapi on March 17, 2026. This guide explains the architecture tradeoff, why voice quality and latency are not the same problem, and when teams should choose a full-stack voice platform over orchestration.

Runway Labs Guide 2026: Why This March 11 Launch Matters Beyond Product Hype

Runway introduced Runway Labs on March 11, 2026 as an internal incubator for new products built on generative video and General World Models. This guide explains why that matters, what it signals, and how builders should interpret it.

Voice Agent for Docs Playbook 2026: What ElevenLabs Learned from 200 Calls a Day

ElevenLabs updated its docs-agent case study on March 14, 2026. This guide breaks down what actually worked: over 80% automated resolution in evaluation, 89% success in human validation, strict redirect rules, and prompt patterns that fit voice instead of chat.

ElevenLabs Agents Guide 2026: Lower Latency, Expressive Voices, and Real Deployment Controls

ElevenLabs introduced Agents on March 6, 2026, reframing its voice platform around talk, type, and action across phone, web, and apps. Learn how Agents, Conversational AI 2.0, and Expressive Mode fit together in real production workflows.

ElevenLabs Scribe v2 Guide 2026: Better Diarization, Live API Expansion, and Lower Cost

ElevenLabs launched Scribe v2 on March 11, 2026 with higher accuracy in 99 languages, 98% speaker label accuracy, better turn-level timestamps, and pricing 40% lower than before. Learn what changed and how to use it for captions and transcripts.

HeyGen Video Agent Guide 2026: Prompt-to-Video, ChatGPT, and Lower API Costs

HeyGen expanded Video Agent in February 2026 with Prompt-to-Video in the API, ChatGPT video creation, and lower pricing later in the month. Learn what changed, how it works, and when to use it in an AI video workflow.

Krea Edit Guide 2026: Change Backgrounds, Swap Objects, and Restyle Images

Krea launched Krea Edit on March 9, 2026, letting users change backgrounds, swap objects, and restyle images with simple text prompts. Learn what it does, when it beats full regeneration, and how to use it in a practical image workflow.

Krea Image to Prompt Guide 2026: Reverse-Engineer Visual References Faster

Krea launched Image to Prompt on March 5, 2026. It analyzes an image and writes a 30- to 100-word prompt describing medium, style, composition, objects, geometry, and typography. Learn when it helps and where it does not.

Krea Prompt-to-Workflow Guide 2026: Turn One Sentence Into an Editable Pipeline

Krea launched Prompt to Workflow on February 10, 2026, letting users describe a visual task in one sentence and have the workflow update around it. Learn when this beats manual node editing, where it breaks, and how to use it well.

Midjourney Personalization Guide 2026: Moodboards, Ranking, and Web Updates

Midjourney updated Personalization on February 26, 2026 with improved ranking and stronger moodboard support on the web. Learn what changed, how to train it faster, and when to use it in real creative workflows.

Runway Characters Guide 2026: Build Real-Time Conversational Avatars

Runway launched Characters on March 9, 2026 as a real-time video agent API. Learn what it does, how to set up a character from a single image, and where it fits in AI avatar workflows.

Runway Third-Party Models Guide 2026: Kling, Sora 2 Pro, WAN, and GPT-Image in One Workspace

Runway added third-party models on February 20, 2026, including Kling 3.0, WAN2.2 Animate, GPT-Image-1.5, and Sora 2 Pro. Learn why this matters and how to use one workspace to compare model fit faster.

Sora Extensions Guide 2026: Extend Videos and Animate People

OpenAI shipped Sora Extensions on February 9, 2026 and image-to-video with people on February 4, 2026. Learn what changed, how to use both features, and where they fit in a real video workflow.

Suno Studio 1.2 Guide 2026: Remove FX, Warp Markers, Alternates, and Time Signatures

Suno launched Studio 1.2 on February 6, 2026 with Remove FX, Warp Markers with Quantize, Alternates, and Time Signature support. Learn what changed and how to use it in a practical music workflow.

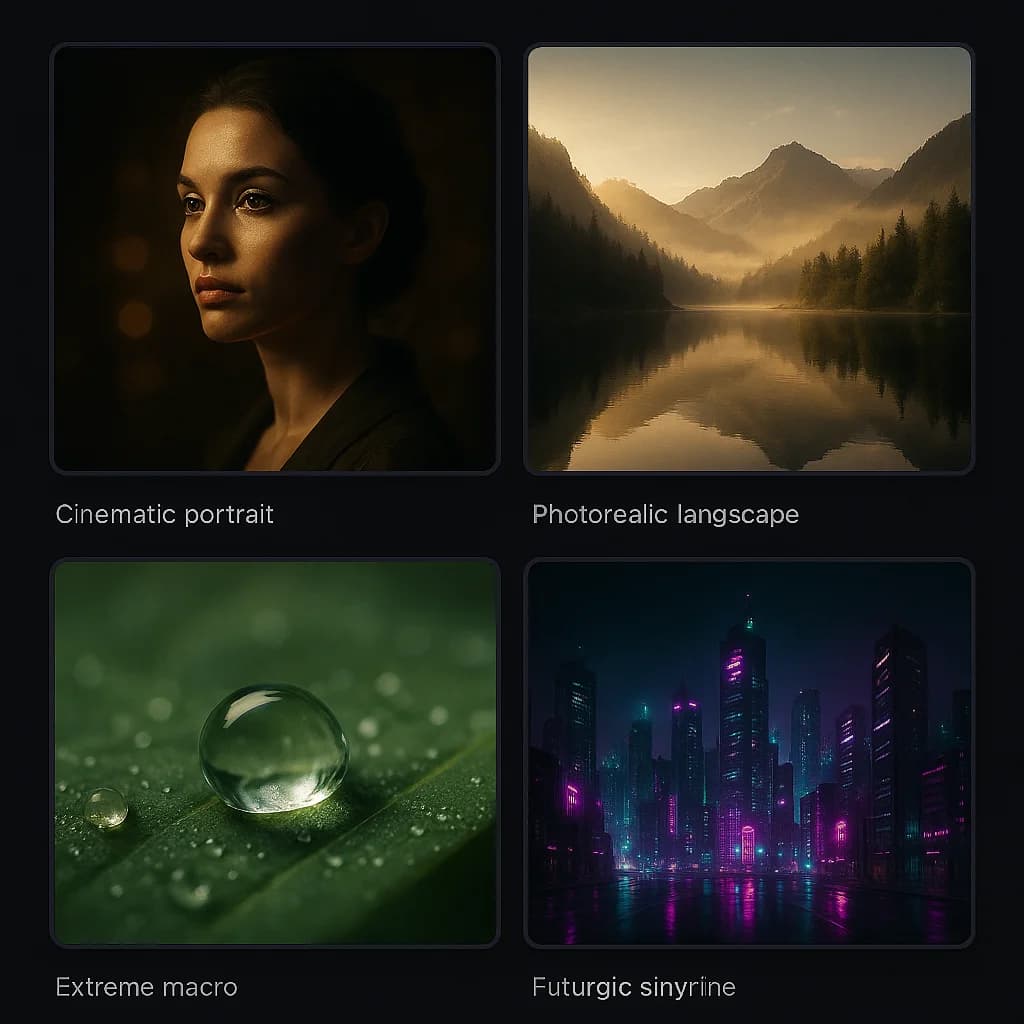

Best AI Image Generators 2026: Midjourney, FLUX.2, GPT Image Compared

Compare the best AI image generators in 2026. Midjourney v7, FLUX.2, GPT Image 1.5, and Stability AI image models ranked by quality, control, pricing, and real workflow fit.

Midjourney v7 vs Flux 2: Which AI Image Generator Is Better?

In-depth comparison of Midjourney v7 and Flux 2 in 2026. Compare image quality, text rendering, pricing, API access, and customization side by side.

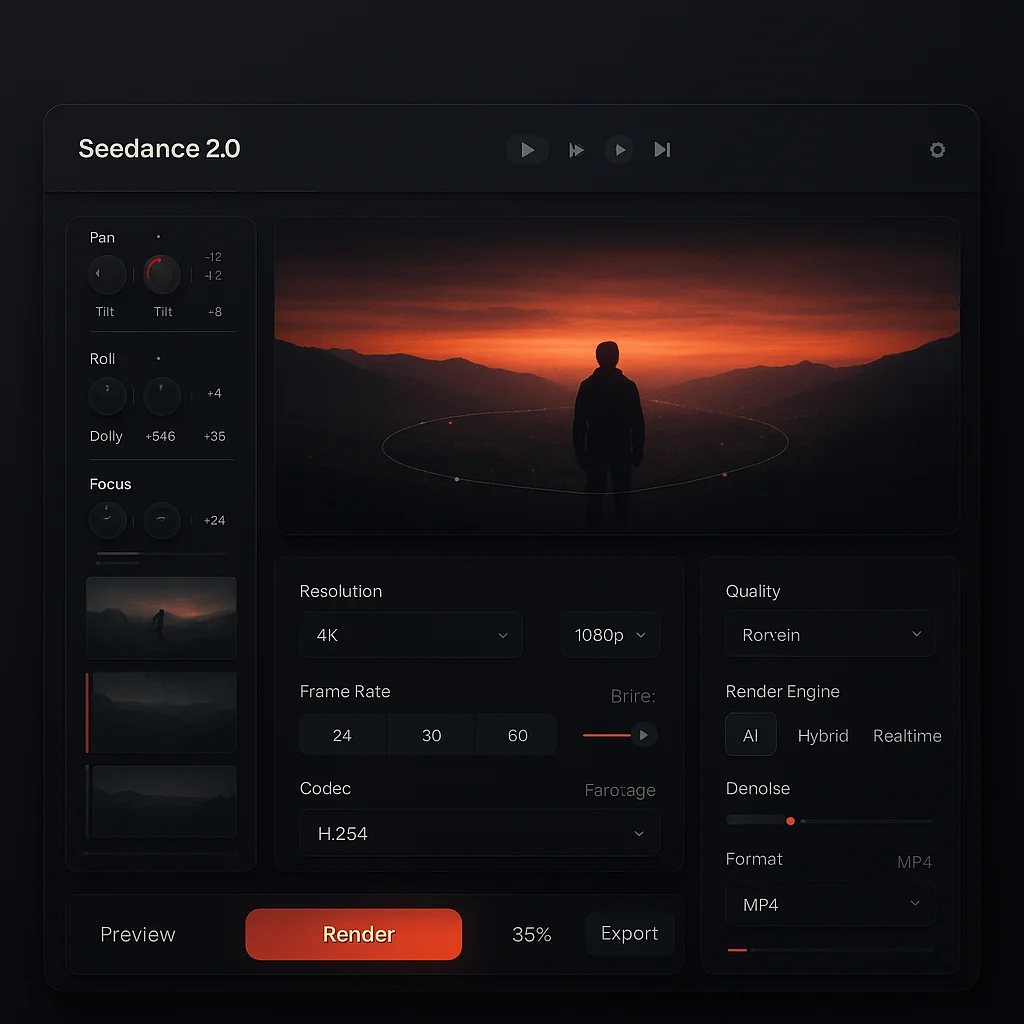

Seedance AI: Complete Guide to ByteDance's Video Generator (Updated April 2026)

Everything about Seedance AI, ByteDance's video generator with Director Mode, 4K output, face-lock, and lip sync. Updated after the April 2, 2026 API public beta news.

Sora 2 vs Kling 3.0: Which AI Video Generator Wins in 2026?

In-depth comparison of OpenAI Sora 2 and Kuaishou Kling 3.0 in 2026. Compare video quality, duration, pricing, multi-shot editing, and use cases side by side.

Suno v5 vs Udio 2: AI Music Generator Showdown 2026

In-depth comparison of Suno v5 and Udio 2 in 2026. Compare music quality, vocals, pricing, genre coverage, and commercial licensing side by side.

AI Prompts for Creating Viral Videos on YouTube: 50+ Templates (2026)

Master AI video prompts for YouTube. 50+ ready-to-use prompt templates for Shorts, tutorials, product demos, and storytelling. Works with Seedance, Sora, and Kling.

Seedance 1.0 Pro to 2.0: Migration Guide for 2026

How to migrate old Seedance 1.0 Pro workflows to Seedance 2.0 without relying on outdated pricing, endpoint, or free-tier assumptions.

Claude Code Skills for Video Production: SKILL.md Tutorial & Automation Guide

Learn how Claude Code skills automate video production. Set up SKILL.md files to chain script generation, video creation, editing, and publishing into a single command.

AI Video Pipeline: Complete Production Guide (2026)

Master the full 9-stage AI video production workflow. From script to publication, learn the tools, costs, and automation strategies for every pipeline stage.

Best AI Video Tools 2026: Complete Comparison by Category

Compare the best AI video generators of 2026 across quality, pricing, API access, and ease of use. Includes Seedance, Sora, Kling, Runway, Pika, and more.

Character Consistency in AI Video: How to Keep Characters Looking the Same

Solve the biggest challenge in AI video: maintaining character consistency across shots. Learn 4 proven methods including reference images, LoRA training, and prompt anchoring.

Seedance 2.0 API Guide: What Changed on April 2, 2026

Updated April 3, 2026: the Seedance 2.0 API is now better understood through Volcengine / ModelArk enterprise access, which is separate from the CapCut / Dreamina consumer rollout and from uneven overseas API availability.

Seedance 2.0 Tutorial: Complete Guide to ByteDance's AI Video Generator (2026)

Learn how to use Seedance 2.0 for AI video generation. Step-by-step tutorial covering setup, prompts, API integration, and best practices.

Seedance Free in 2026: What Still Works After the April 2 Update

Updated April 2, 2026: Seedance free access is now channel-based. Here is what we can verify about Dreamina, CapCut, and consumer trial access versus the China enterprise API beta.

Seedance Pricing 2026: Public Beta, API Billing, and Free Access Update

Updated April 2, 2026: what changed in Seedance 2.0 pricing after the Volcengine API public beta, including token-based billing and region-specific consumer access.

Seedance Prompt Guide: 50+ Templates for AI Video (2026)

Write effective Seedance 2.0 prompts with 50+ templates, the SCELA framework, common mistakes, and advanced techniques.

Seedance vs Kling 2026: Short-Form Precision vs Longer Narrative Range

Updated 2026 comparison of Seedance 2.0 and Kling AI. Compare quality, pricing visibility, access paths, API reality, and best-fit workflows.

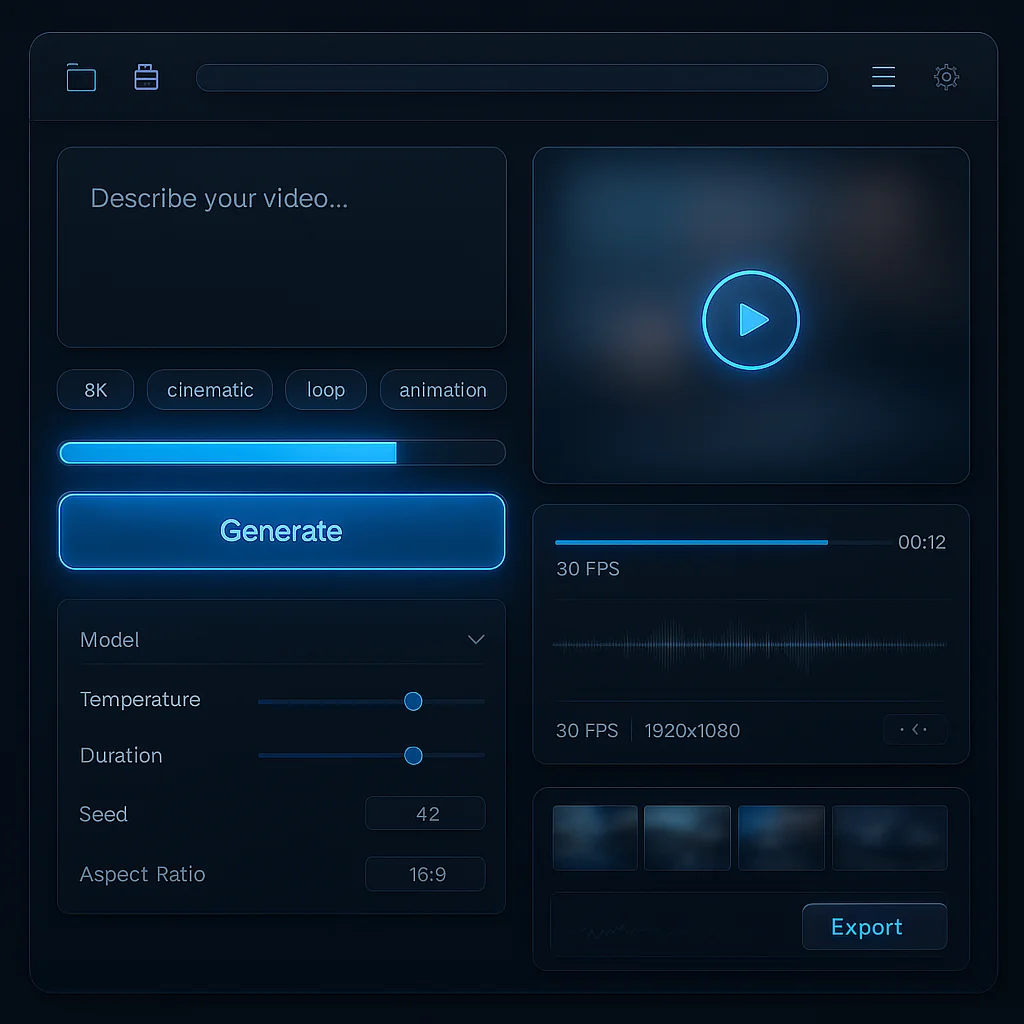

Seedance vs Runway 2026: Which AI Video Path Fits Better Today?

Updated April 2026. Compare Seedance 2.0 and Runway Gen-4 through short-form quality, creative control, access paths, API reality, and how readable pricing is right now.

Seedance vs Sora 2026: Which AI Video Path Makes More Sense Now?

Updated April 2026 comparison of Seedance 2.0 and OpenAI Sora 2. Compare short-form quality, access paths, API status, and long-term workflow fit.